Open-Source vs Proprietary LLMs

DeepSeek's Disruption & the Future of Generative AI

Executive Summary

The choice between open-source and proprietary large language models (LLMs) is a central debate in artificial intelligence, contrasting two distinct approaches to developing and deploying AI technologies. Open-source LLMs such as Llama and DeepSeek are openly released systems that give users access to model weights and, in many cases, code, fostering transparency, customization, and community-driven innovation. In contrast, proprietary LLMs, developed by private companies such as OpenAI, Google, and Anthropic, are closed systems that offer high performance but restrict user access to underlying architectures and data, often leading to concerns about cost and flexibility.

Open-source models are lauded for their cost-effectiveness and adaptability, appealing to organizations eager to experiment with generative AI without significant financial barriers. However, challenges such as inconsistent documentation and the need for specialized expertise can hinder their broader adoption. Proprietary models, on the other hand, are recognized for their cutting-edge performance and ease of integration, but they often come with high licensing fees and limited opportunities for customization, which can stifle innovation in specific use cases.

The discussion surrounding open-source versus proprietary LLMs is rife with controversy, particularly concerning data privacy, security risks, and intellectual property. Open-source advocates argue that transparency can enhance security and trust, while critics highlight the risks of unregulated access to AI technologies. Supporters of proprietary models, meanwhile, emphasize the robust support and performance guarantees that can be crucial for enterprise-level applications.

As organizations navigate the complex decision of choosing between open-source and proprietary LLMs, factors such as cost, performance, customization, and community support play pivotal roles. The outcome of this choice will significantly shape the future trajectory of AI development, with implications for innovation, ethics, and the overall democratization of AI technologies.

Open-source vs Proprietary LLMs

The choice between open-source and proprietary large language models (LLMs) hinges on several critical factors, including cost, control, performance, and specific use cases.

Open-source LLMs are often seen as the more economical choice. Organizations using open-source models typically incur lower costs, mainly hosting and compute fees, rather than the licensing fees and vendor margins built into proprietary models. Because there are no per-token or licensing charges, enterprises can redirect budget toward customization and further development. Proprietary LLMs, on the other hand, typically charge for API access by token usage, and those charges can add up significantly as the volume of data processed grows.
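The cost trade-off described above can be made concrete with a back-of-the-envelope comparison. The sketch below is illustrative only: the function names, the price of $10 per million tokens, and the $2,000 monthly hosting bill are hypothetical figures chosen for the arithmetic, not quotes from any vendor.

```python
def api_cost(tokens_per_month, usd_per_million_tokens):
    """Monthly bill for a usage-priced proprietary API."""
    return tokens_per_month / 1_000_000 * usd_per_million_tokens


def break_even_tokens(hosting_usd_per_month, usd_per_million_tokens):
    """Monthly token volume at which a fixed hosting bill equals the API bill."""
    return hosting_usd_per_month / usd_per_million_tokens * 1_000_000


# Hypothetical inputs: $10 per million tokens versus $2,000/month self-hosting.
print(api_cost(50_000_000, 10.0))        # 500.0 USD for 50M tokens
print(break_even_tokens(2000.0, 10.0))   # 200000000.0 tokens per month
```

Under these assumed numbers, self-hosting only pays off above roughly 200 million tokens a month; below that volume the usage-priced API is cheaper. Engineering and maintenance time are not included and would push the break-even point higher.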

One of the most significant advantages of open-source LLMs is their flexibility. Users have the freedom to modify and customize these models to meet specific requirements, which can lead to enhanced performance for particular tasks. This capability is particularly beneficial for businesses looking to tailor models to specialized datasets or unique applications. In contrast, proprietary LLMs often provide limited customization options and may require adherence to strict licensing agreements that restrict modifications.

Open-source LLMs benefit from a robust and active community of developers and users who contribute to ongoing improvements and troubleshooting efforts. This community-driven innovation can lead to rapid advancements and the continuous evolution of features. Conversely, proprietary models may offer dedicated support and established performance but can lack the collaborative spirit that characterizes open-source projects.

When it comes to performance, proprietary LLMs often excel in speed and reliability, and they typically come with stronger security assurances and dedicated support, making them attractive to businesses with stringent compliance requirements. These models are generally backed by major technology firms, which can provide assurances regarding reliability and legal protections for enterprises. However, open-source models have shown strong performance across various natural language processing tasks and continue to improve, closing the gap with proprietary solutions.

While open-source LLMs offer many advantages, they are not without challenges. Integrating these models into existing systems can lead to compatibility issues, in part because standardized APIs are often lacking. Additionally, concerns regarding intellectual property can arise if models are trained on proprietary or sensitive data, leading some organizations to hesitate in adopting open-source solutions. In contrast, proprietary models usually come with clear legal agreements that mitigate these concerns.

Integrating large language models (LLMs) into existing organizational frameworks presents a variety of challenges that must be carefully navigated to achieve effective deployment. A primary challenge lies in establishing an efficient data pipeline and sound information management practices. Organizations must gather data from disparate locations, including data silos, on-premise systems, and cloud-hosted databases. This complex data landscape necessitates a re-evaluation of traditional concepts of data ownership and stewardship, emphasizing the need for clear strategies to manage these resources effectively. Ensuring that data is clean, properly labeled, and well structured is critical to the successful application of AI technologies.

Security concerns are paramount during the integration of LLMs, especially when third-party tools and services are involved. Each new integration can introduce risks such as prompt injection vulnerabilities and misconfigured permissions. The interconnected nature of these tools means that a breach in one system could potentially compromise the entire network. Therefore, a robust security strategy, including testing for vulnerabilities and validating access permissions, is essential to mitigate these risks while harnessing the innovative capabilities of LLMs.

Another significant challenge is balancing performance with accuracy. Engineers often grapple with the tradeoff between response time and answer quality, particularly in retrieval-augmented generation (RAG) applications, where delays can negatively impact user experience. Achieving seamless integration into existing applications requires careful planning, from defining use cases to ensuring compliance with privacy laws, which can be a complex undertaking.

The lack of clear documentation regarding the deployment and use cases of LLMs can hinder effective utilization. Comprehensive documentation is vital for users to navigate the functionalities of LLMs successfully. Additionally, responsible oversight is crucial; without a centralized authority overseeing deployment, it becomes difficult to enforce safeguards against misuse, leading to fragmented governance across different models and use cases.

Resource limitations pose a further barrier, as insufficient computational resources can lead to performance issues such as out-of-memory errors. Users must ensure that their infrastructure meets the necessary requirements to run LLMs effectively, which adds a further layer of complexity to the integration process.
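A quick way to check whether infrastructure is adequate is to estimate the memory needed just to hold a model's weights: the parameter count multiplied by the bytes used per parameter. The sketch below assumes common numeric precisions and a hypothetical 7-billion-parameter model; it covers weights only, so activations and the attention cache would add to the total.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(n_params, precision):
    """Approximate memory (in GB, 10^9 bytes) needed to hold the weights alone."""
    return n_params * BYTES_PER_PARAM[precision] / 1e9


# Hypothetical 7-billion-parameter model at different precisions.
for precision in ("fp32", "fp16", "int8", "int4"):
    print(precision, weight_memory_gb(7e9, precision), "GB")
```

At 16-bit precision the assumed model needs about 14 GB for weights, which already exceeds many consumer GPUs; quantizing to 4 bits brings that to about 3.5 GB at some cost in accuracy.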

As organizations continue to explore the capabilities of LLMs, they must remain cognizant of these challenges and strive for a balance between innovation and security. Developing a clear understanding of the potential pitfalls and planning accordingly will be crucial for the successful adoption of LLMs within enterprise settings.

DeepSeek: The Poster Boy of Open-Source LLMs

The recent release of DeepSeek caught the industry by surprise. Marc Andreessen called it a “Sputnik moment”, and the ensuing sell-off wiped roughly $1tn off the market value of technology stocks.

DeepSeek is an open-source large language model (LLM) developed by a prominent Chinese AI lab, designed to perform diverse natural language processing tasks such as reasoning, coding, and content generation. Distinguished by its cost-effectiveness, scalability, and versatility, DeepSeek offers performance that rivals proprietary models like OpenAI's at a fraction of the cost, making advanced AI tools accessible to a broader audience, including startups and academic institutions. This democratization of technology is particularly significant as organizations increasingly seek affordable alternatives to high-cost proprietary solutions in the fast-evolving field of artificial intelligence. While DeepSeek presents numerous benefits, including enhanced customization, privacy control, and collaborative innovation, it is not without limitations. Designed for both individual developers and enterprise applications, DeepSeek scales from small projects to large deployments. Additionally, enterprises have the flexibility to fine-tune DeepSeek models using proprietary data, ensuring that the models are relevant and compliant with industry-specific standards.

DeepSeek has undergone several iterations, with each version introducing notable innovations. In particular, DeepSeek V3 relies on multi-head latent attention (MLA), a variant of the standard multi-head attention used by most other models. Rather than caching full keys and values for every attention head, MLA stores a compressed latent representation, which sharply reduces memory use during inference. As noted by Alberto Peliccione in his blog, “MLA compresses the KV cache into a lower dimensional space, resulting in much lower memory usage — 5% to 13% that of a vanilla attention! — while maintaining performance.” By comparison, the architectures of OpenAI’s o1/o3 models and Google’s Gemini 2.0 are not publicly documented; to the extent that they rely on more conventional attention mechanisms, they face greater memory pressure when handling ultra-long contexts. MLA gives DeepSeek a significant advantage in tasks requiring extensive contextual reasoning, such as legal document analysis and long-form content generation.
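The memory saving from compressing the KV cache can be illustrated with simple arithmetic. The sketch below assumes that standard attention caches a key and a value vector for every head, while a latent-compressed cache holds one low-dimensional latent vector plus a small positional component per token. All dimensions (32 heads of size 128, a 512-dimensional latent, a 64-dimensional positional part) are hypothetical values chosen for illustration, not DeepSeek's published configuration.

```python
def standard_cache_per_token(n_heads, head_dim):
    """Values cached per token per layer: one key and one value vector per head."""
    return 2 * n_heads * head_dim


def latent_cache_per_token(latent_dim, positional_dim):
    """Values cached per token per layer when only a compressed latent is stored."""
    return latent_dim + positional_dim


# Hypothetical dimensions, chosen only to make the arithmetic concrete.
standard = standard_cache_per_token(n_heads=32, head_dim=128)           # 8192
latent = latent_cache_per_token(latent_dim=512, positional_dim=64)      # 576
ratio = latent / standard
print(f"latent cache is {ratio:.1%} of the standard cache")             # 7.0%
```

With these assumed sizes the compressed cache is about 7% of the standard one, which falls inside the 5% to 13% range quoted above. The actual ratio depends on the model's dimensions and on which baseline it is compared against.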

Another important innovation introduced by DeepSeek is the auxiliary-loss-free load balancing strategy, which distributes computational load evenly across the experts in its mixture-of-experts architecture without negatively impacting performance. It improves training efficiency by removing the overhead of managing auxiliary losses, and it is credited with strong results on benchmarks like MATH-500 and MMLU because it minimizes the trade-off between load balancing and accuracy.
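One way to balance load without an auxiliary loss is to keep a per-expert bias that is added to routing scores only when choosing experts, then nudge that bias down for overloaded experts and up for underloaded ones after each batch. The sketch below is a simplified toy version of that idea under stated assumptions: the function names, the two-expert setup, the scores, and the step size of 0.1 are all hypothetical, and it is not DeepSeek's implementation.

```python
def route(scores, bias, k=1):
    """Pick the top-k experts per token by score + bias.

    The bias affects only which experts are selected; a full model would
    still weight expert outputs by the original, unbiased scores.
    """
    n_experts = len(bias)
    return [
        sorted(range(n_experts), key=lambda e: s[e] + bias[e], reverse=True)[:k]
        for s in scores
    ]


def expert_load(assignments, n_experts):
    """Count how many tokens were routed to each expert."""
    load = [0] * n_experts
    for experts in assignments:
        for e in experts:
            load[e] += 1
    return load


def update_bias(bias, load, step):
    """Lower the bias of overloaded experts and raise it for underloaded ones."""
    mean = sum(load) / len(load)
    new_bias = []
    for b, n in zip(bias, load):
        if n > mean:
            new_bias.append(b - step)
        elif n < mean:
            new_bias.append(b + step)
        else:
            new_bias.append(b)
    return new_bias


# Four tokens, two experts; every token initially prefers expert 0.
scores = [[0.9, 0.1], [0.8, 0.2], [0.7, 0.6], [0.6, 0.5]]
bias = [0.0, 0.0]
load_before = expert_load(route(scores, bias), 2)   # [4, 0]
bias = update_bias(bias, load_before, step=0.1)     # [-0.1, 0.1]
load_after = expert_load(route(scores, bias), 2)    # [2, 2]
print(load_before, load_after)
```

In this toy run a single bias update moves two tokens to the idle expert, and no extra loss term is involved, so the gradient of the main objective is left untouched. Real training would apply the update repeatedly over many batches with a much smaller step.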

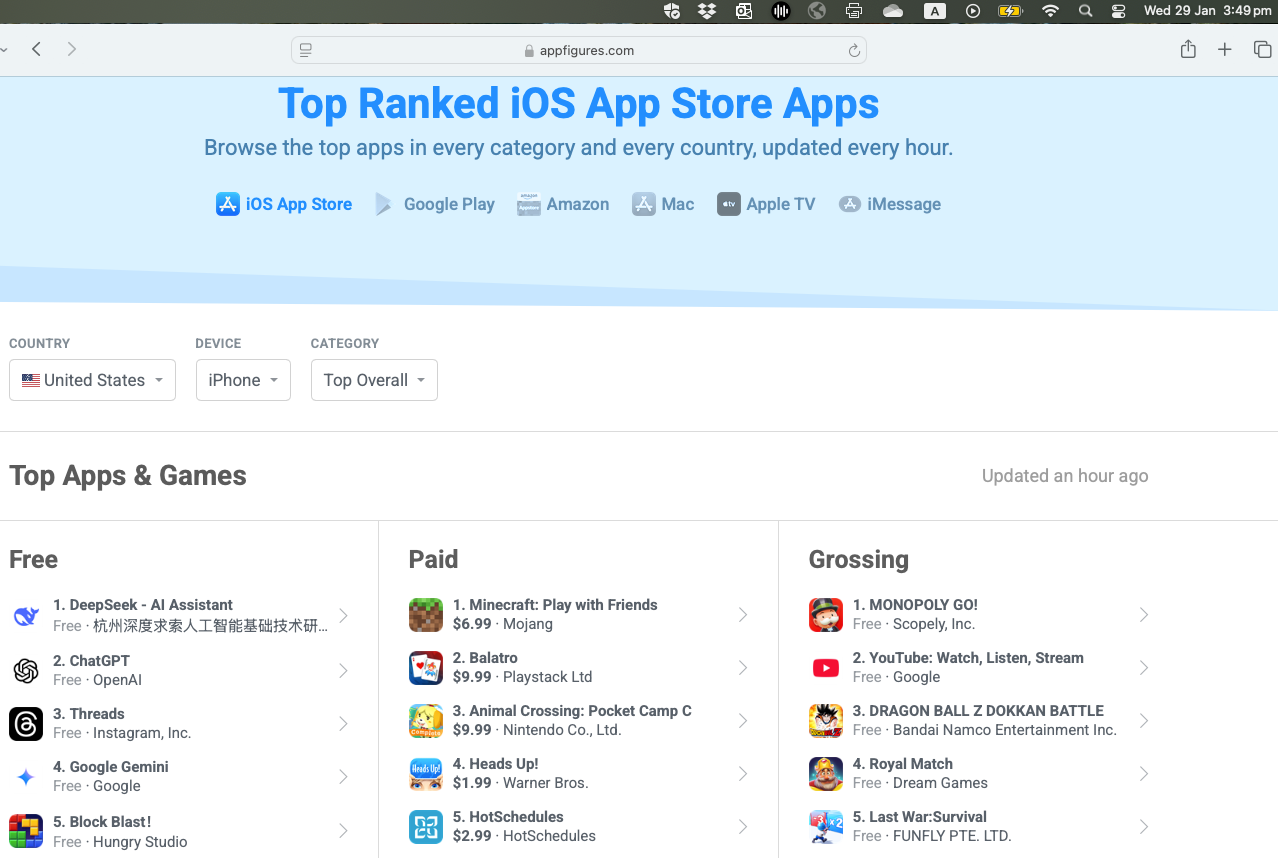

DeepSeek was the #1 free app on the Apple App Store, ahead of TikTok and ChatGPT, according to AppFigures on 29 January 2025 (see figure above). Even so, the Chinese startup faces significant challenges, including restricted access to compute resources and high-quality datasets. China has massive amounts of text data, but it often lacks diversity and cleanliness, making it less suitable for training LLMs.

Future Trends

As the landscape of Large Language Models (LLMs) continues to evolve, the future holds significant promise for both open-source and proprietary models. The ongoing development of these technologies suggests that the integration of LLMs into various sectors will accelerate, bringing forth innovative applications and transformative solutions across industries.

The open-source community is witnessing rapid expansion, attracting a diverse array of contributors who enhance existing models and introduce novel ideas. Reports indicate a notable shift in preference towards open-source LLMs, with a significant percentage of enterprises expressing intentions to increase their usage of these models. The democratization of AI through open-source initiatives is expected to drive innovation and collaboration, allowing more stakeholders to participate in LLM development and deployment.

As enterprise interest in open-source models grows, a more balanced ecosystem is anticipated, where open-source and proprietary models coexist and complement each other. Current trends suggest that the market, which was heavily dominated by closed-source models, may soon experience a shift towards equal distribution between open and closed models, fostering healthy competition that could benefit users and developers alike.

DeepSeek built on openly available work, including Meta’s Llama models, on which several of its distilled variants are based, which means others can follow suit. Most importantly, resource-rich corporations can now emulate DeepSeek’s innovations while also cutting costs. In fact, the most important disruption effected by DeepSeek is the reduction in cost. This will only accelerate innovation, and big players like OpenAI, Anthropic and Google will now have to compete on price. This is not a simple case of disruptive innovation in the style of Clayton Christensen’s famous model. It is disruption at breakneck speed, with no clear winners given the distributed efforts of both corporate entities and open-source communities.

Indeed, the development of open-source LLMs is proceeding at an unprecedented rate. As new models with improved capabilities are regularly released, ongoing evaluations will be essential to assess their real-world performance and effectiveness. Future work should focus on understanding the contributions of the open-source community: whether they primarily provide add-on, complementary solutions or also generate novel ideas that influence further research, development, and the commercialization of new products.

Policymakers will play a crucial role in shaping the future of LLMs by establishing regulations that protect the public while promoting innovation and fair competition within the AI industry. Balancing these responsibilities will be vital for ensuring a sustainable future for both open-source and proprietary LLMs, fostering an environment conducive to continued advancements in technology.